Examples of Preprocessing and Models

Source:vignettes/preprocessing-and-models.Rmd

preprocessing-and-models.RmdIntroduction

The epipredict package utilizes the

tidymodels framework, namely {recipes} for dplyr-like pipeable sequences of

feature engineering and {parsnip} for a

unified interface to a range of models.

epipredict has additional customized feature engineering

and preprocessing steps, such as step_epi_lag(),

step_population_scaling(), step_epi_naomit().

They can be used along with steps from the recipes

package for more feature engineering.

In this vignette, we will illustrate some examples of how to use

epipredict with recipes and

parsnip for different purposes of epidemiological

forecasting. We will focus on basic autoregressive models, in which

COVID cases and deaths in the near future are predicted using a linear

combination of cases and deaths in the near past.

The remaining vignette will be split into three sections. The first section, we will use a Poisson regression to predict death counts. In the second section, we will use a linear regression to predict death rates. Last but not least, we will create a classification model for hotspot predictions.

Poisson Regression

During COVID-19, the US Center for Disease Control and Prevention (CDC) collected models and forecasts to characterize the state of an outbreak and its course. They use it to inform public health decision makers on potential consequences of deploying control measures.

One of the outcomes that the CDC forecasts is death

counts from COVID-19. Although there are many state-of-the-art

models, we choose to use Poisson regression, the textbook example for

modeling count data, as an illustration for using the

epipredict package with other existing tidymodels

packages.

The counts_subset dataset is available in the epidatasets

package), and contains the number of confirmed cases and deaths from

June 4, 2021 to Dec 31, 2021 in some U.S. states. It can be loaded

with:

x <- epidatasets::counts_subsetThe data can also be fetched from the Delphi API with the following query:

library(epidatr)

d <- as.Date("2024-03-20")

x <- pub_covidcast(

source = "jhu-csse",

signals = "confirmed_incidence_num",

time_type = "day",

geo_type = "state",

time_values = epirange(20210604, 20211231),

geo_values = "ca,fl,tx,ny,nj",

as_of = d

) %>%

select(geo_value, time_value, cases = value)

y <- pub_covidcast(

source = "jhu-csse",

signals = "deaths_incidence_num",

time_type = "day",

geo_type = "state",

time_values = epirange(20210604, 20211231),

geo_values = "ca,fl,tx,ny,nj",

as_of = d

) %>%

select(geo_value, time_value, deaths = value)

x <- full_join(x, y, by = c("geo_value", "time_value")) %>%

as_epi_df(as_of = d)We wish to predict the 7-day ahead death counts with lagged cases and deaths. Furthermore, we will let each state be a dummy variable. Using differential intercept coefficients, we can allow for an intercept shift between states.

The model takes the formwhere , and is assumed to follow a Poisson distribution with mean ; are dummy variables for each state and take values of either 0 or 1.

Preprocessing steps will be performed to prepare the data for model

fitting. But before diving into them, it will be helpful to understand

what roles are in the recipes framework.

Aside on recipes

recipes can assign one or more roles to each column in

the data. The roles are not restricted to a predefined set; they can be

anything. For most conventional situations, they are typically

“predictor” and/or “outcome”. Additional roles enable targeted

step_*() operations on specific variables or groups of

variables.

In our case, the role predictor is given to explanatory

variables on the right-hand side of the model (in the equation above).

The role outcome is the response variable that we wish to

predict. geo_value and time_value are

predefined roles that are unique to the epipredict package.

Since we work with epi_df objects, all datasets should have

geo_value and time_value passed through

automatically with these two roles assigned to the appropriate columns

in the data.

The recipes package also allows manual

alterations of roles in bulk. There are a few handy functions that

can be used together to help us manipulate variable roles easily.

update_role()alters an existing role in the recipe or assigns an initial role to variables that do not yet have a declared role.

add_role()adds an additional role to variables that already have a role in the recipe, without overwriting old roles.

remove_role()eliminates a single existing role in the recipe.

End aside

Notice in the following preprocessing steps, we used

add_role() on geo_value_factor since,

currently, the default role for it is raw, but we would

like to reuse this variable as predictors.

counts_subset <- counts_subset %>%

mutate(geo_value_factor = as.factor(geo_value)) %>%

as_epi_df()

epi_recipe(counts_subset)

#>

#> ── Epi Recipe ───────────────────────────────────────────────────────────────

#>

#> ── Inputs

#> Number of variables by role

#> raw: 3

#> geo_value: 1

#> time_value: 1

r <- epi_recipe(counts_subset) %>%

add_role(geo_value_factor, new_role = "predictor") %>%

step_dummy(geo_value_factor) %>%

## Occasionally, data reporting errors / corrections result in negative

## cases / deaths

step_mutate(cases = pmax(cases, 0), deaths = pmax(deaths, 0)) %>%

step_epi_lag(cases, deaths, lag = c(0, 7)) %>%

step_epi_ahead(deaths, ahead = 7, role = "outcome") %>%

step_epi_naomit()After specifying the preprocessing steps, we will use the

parsnip package for modeling and producing the prediction

for death count, 7 days after the latest available date in the

dataset.

latest <- get_test_data(r, counts_subset)

wf <- epi_workflow(r, parsnip::poisson_reg()) %>%

fit(counts_subset)

predict(wf, latest) %>% filter(!is.na(.pred))

#> An `epi_df` object, 5 x 3 with metadata:

#> * geo_type = state

#> * time_type = day

#> * as_of = 2024-03-20

#>

#> # A tibble: 5 × 3

#> geo_value time_value .pred

#> <chr> <date> <dbl>

#> 1 ca 2021-12-31 108.

#> 2 fl 2021-12-31 270.

#> 3 nj 2021-12-31 22.5

#> 4 ny 2021-12-31 94.8

#> 5 tx 2021-12-31 91.0Note that the time_value corresponds to the last

available date in the training set, NOT to the target

date of the forecast (2022-01-07).

Let’s take a look at the fit:

extract_fit_engine(wf)

#>

#> Call: stats::glm(formula = ..y ~ ., family = stats::poisson, data = data)

#>

#> Coefficients:

#> (Intercept) geo_value_factor_fl geo_value_factor_nj

#> 3.970e+00 -1.487e-01 -1.425e+00

#> geo_value_factor_ny geo_value_factor_tx lag_0_cases

#> -6.865e-01 3.025e-01 1.339e-05

#> lag_7_cases lag_0_deaths lag_7_deaths

#> 1.717e-06 1.731e-03 8.566e-04

#>

#> Degrees of Freedom: 984 Total (i.e. Null); 976 Residual

#> Null Deviance: 139600

#> Residual Deviance: 58110 AIC: 62710Up to now, we’ve used the Poisson regression to model count data. Poisson regression can also be used to model rate data, such as case rates or death rates, by incorporating offset terms in the model.

To model death rates, the Poisson regression would be expressed as:where is the log of the state population that was used to scale the count data on the left-hand side of the equation. This offset is simply a predictor with coefficient fixed at 1 rather than estimated.

There are several ways to model rate data given count and population

data. First, in the parsnip framework, we could specify the

formula in fit(). However, by doing so we lose the ability

to use the recipes framework to create new variables since

variables that do not exist in the original dataset (such as, here, the

lags and leads) cannot be called directly in fit().

Alternatively, step_population_scaling() and

layer_population_scaling() in the epipredict

package can perform the population scaling if we provide the population

data, which we will illustrate in the next section.

Linear Regression

For COVID-19, the CDC required submission of case and death count

predictions. However, the Delphi Group preferred to train on rate data

instead, because it puts different locations on a similar scale

(eliminating the need for location-specific intercepts). We can use a

liner regression to predict the death rates and use state population

data to scale the rates to counts.1 We will do so using

layer_population_scaling() from the epipredict

package.

Additionally, when forecasts are submitted, prediction intervals

should be provided along with the point estimates. This can be obtained

via postprocessing using layer_residual_quantiles(). It is

worth pointing out, however, that

layer_residual_quantiles() should be used before population

scaling or else the transformation will make the results

uninterpretable.

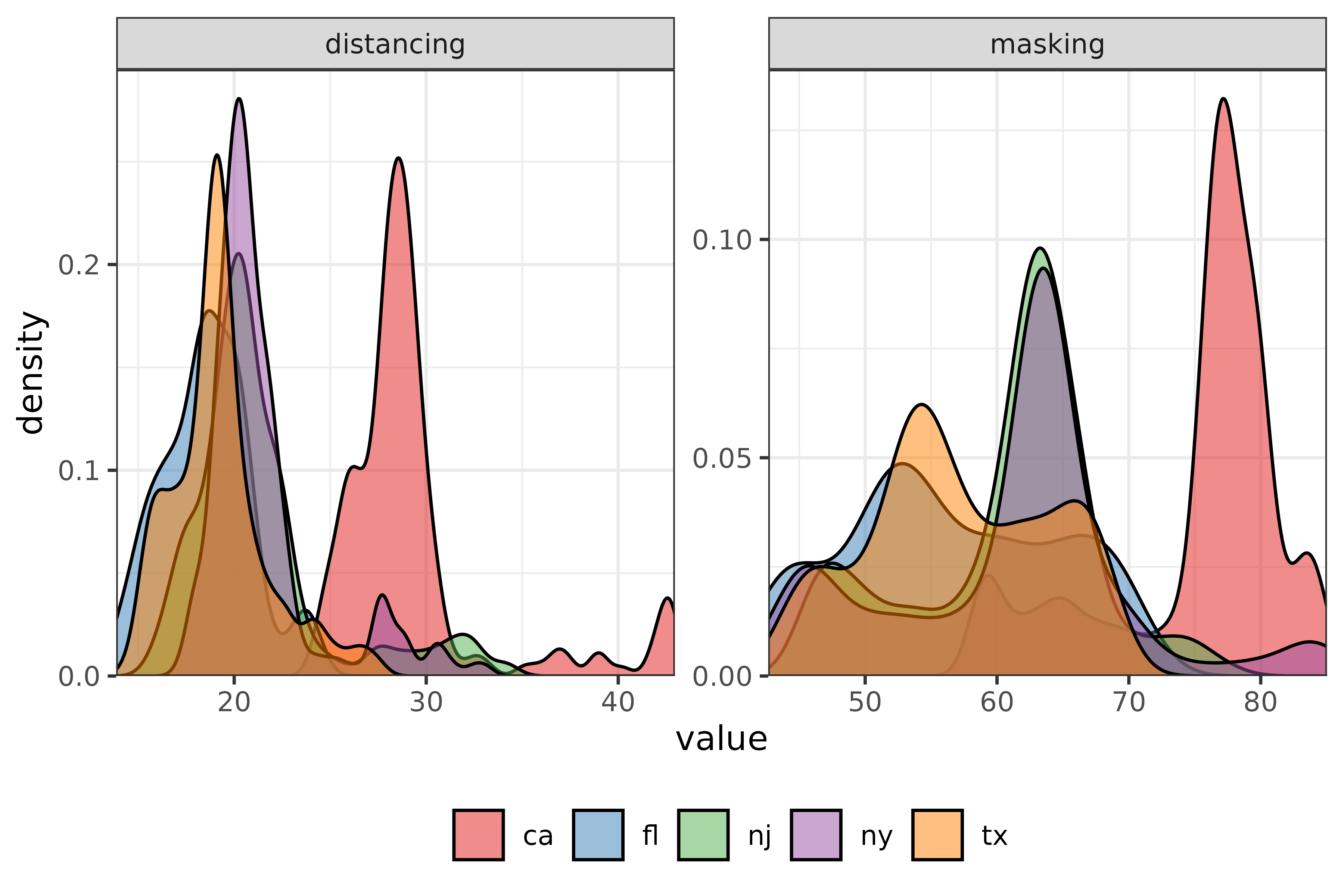

We wish, now, to predict the 7-day ahead death counts with lagged case rates and death rates, along with some extra behaviourial predictors. Namely, we will use survey data from COVID-19 Trends and Impact Survey.

The survey data provides the estimated percentage of people who wore a mask for most or all of the time while in public in the past 7 days and the estimated percentage of respondents who reported that all or most people they encountered in public in the past 7 days maintained a distance of at least 6 feet.

State-wise population data from the 2019 U.S. Census will be used in

layer_population_scaling().

Both datasets are available in the epidatasets

package), and can be loaded with:

behav_ind <- epidatasets::ctis_covid_behaviours

pop_dat <- epidatasets::state_census %>% select(abbr, pop)The data can also be fetched from the Delphi API with the following query:

library(epidatr)

d <- as.Date("2024-03-20")

behav_ind_mask <- pub_covidcast(

source = "fb-survey",

signals = "smoothed_wwearing_mask_7d",

time_type = "day",

geo_type = "state",

time_values = epirange(20210604, 20211231),

geo_values = "ca,fl,tx,ny,nj",

as_of = d

) %>%

select(geo_value, time_value, masking = value)

behav_ind_distancing <- pub_covidcast(

source = "fb-survey",

signals = "smoothed_wothers_distanced_public",

time_type = "day",

geo_type = "state",

time_values = epirange(20210604, 20211231),

geo_values = "ca,fl,tx,ny,nj",

as_of = d

) %>%

select(geo_value, time_value, distancing = value)

behav_ind <- behav_ind_mask %>%

full_join(behav_ind_distancing, by = c("geo_value", "time_value")) %>%

as_epi_df(as_of = d)

pop_dat <- state_census %>% select(abbr, pop)Rather than using raw mask-wearing / social-distancing metrics, for the sake of illustration, we’ll convert both into categorical predictors.

We will take a subset of death rate and case rate data from the

built-in dataset covid_case_death_rates.

jhu <- filter(

covid_case_death_rates,

time_value >= "2021-06-04",

time_value <= "2021-12-31",

geo_value %in% c("ca", "fl", "tx", "ny", "nj")

)Preprocessing steps will again rely on functions from the

epipredict package as well as the recipes

package. There are also many functions in the recipes

package that allow for scalar

transformations, such as log transformations and data centering. In

our case, we will center the numerical predictors to allow for a more

meaningful interpretation of the intercept.

jhu <- jhu %>%

mutate(geo_value_factor = as.factor(geo_value)) %>%

left_join(behav_ind, by = c("geo_value", "time_value")) %>%

as_epi_df()

r <- epi_recipe(jhu) %>%

add_role(geo_value_factor, new_role = "predictor") %>%

step_dummy(geo_value_factor) %>%

step_epi_lag(case_rate, death_rate, lag = c(0, 7, 14)) %>%

step_mutate(

masking = cut_number(masking, 5),

distancing = cut_number(distancing, 5)

) %>%

step_epi_ahead(death_rate, ahead = 7, role = "outcome") %>%

step_center(contains("lag"), role = "predictor") %>%

step_epi_naomit()As a sanity check we can examine the structure of the training data:

glimpse(slice_sample(bake(prep(r, jhu), jhu), n = 6))

#> Rows: 6

#> Columns: 17

#> $ geo_value <chr> "ny", "ca", "ca", "nj", "ca", "tx"

#> $ time_value <date> 2021-10-16, 2021-11-24, 2021-11-28, 2021-12-03,…

#> $ case_rate <dbl> 23.910028, 11.687497, 10.642052, 34.521027, 32…

#> $ death_rate <dbl> 0.1935616, 0.2489327, 0.1596653, 0.1736988, -0.…

#> $ masking <fct> NA, NA, NA, NA, NA, NA

#> $ distancing <fct> NA, NA, NA, NA, NA, NA

#> $ geo_value_factor_fl <dbl> 0, 0, 0, 0, 0, 0

#> $ geo_value_factor_nj <dbl> 0, 0, 0, 1, 0, 0

#> $ geo_value_factor_ny <dbl> 1, 0, 0, 0, 0, 0

#> $ geo_value_factor_tx <dbl> 0, 0, 0, 0, 0, 1

#> $ lag_0_case_rate <dbl> -3.031947, -15.254479, -16.299923, 7.579052, 5.…

#> $ lag_7_case_rate <dbl> -1.452426, -13.683953, -13.892606, -2.516386, 2…

#> $ lag_14_case_rate <dbl> -1.971052, -11.437390, -12.995578, -5.872955, -…

#> $ lag_0_death_rate <dbl> -0.08822027, -0.03284917, -0.12211657, -0.10808…

#> $ lag_7_death_rate <dbl> -0.10521237, -0.08728057, -0.06514517, -0.14829…

#> $ lag_14_death_rate <dbl> -0.05793007, -0.07639427, -0.07022537, -0.13542…

#> $ ahead_7_death_rate <dbl> 0.1950392, 0.1694629, 0.2282487, 0.1753071, 0.1…Before directly predicting the results, we need to add postprocessing

layers to obtain the death counts instead of death rates. Note that the

rates used so far are “per 100K people” rather than “per person”. We’ll

also use quantile regression with the quantile_reg engine

rather than ordinary least squares to create median predictions and a

90% prediction interval.

f <- frosting() %>%

layer_predict() %>%

layer_add_target_date("2022-01-07") %>%

layer_threshold(.pred, lower = 0) %>%

layer_quantile_distn() %>%

layer_naomit(.pred) %>%

layer_population_scaling(

.pred, .pred_distn,

df = pop_dat,

rate_rescaling = 1e5,

by = c("geo_value" = "abbr"),

df_pop_col = "pop"

)

wf <- epi_workflow(r, quantile_reg()) %>%

fit(jhu) %>%

add_frosting(f)

p <- forecast(wf)

p

#> An `epi_df` object, 5 x 7 with metadata:

#> * geo_type = state

#> * time_type = day

#> * as_of = 2023-03-10

#>

#> # A tibble: 5 × 7

#> geo_value time_value .pred target_date .pred_distn .pred_scaled

#> <chr> <date> <qtls(7)> <date> <qtls(7)> <qtls(7)>

#> 1 ca 2021-12-31 [0.182] 2022-01-07 [0.182] [71.8]

#> 2 fl 2021-12-31 [0.354] 2022-01-07 [0.354] [76.1]

#> 3 nj 2021-12-31 [0.651] 2022-01-07 [0.651] [57.8]

#> 4 ny 2021-12-31 [0.703] 2022-01-07 [0.703] [137]

#> 5 tx 2021-12-31 [0.296] 2022-01-07 [0.296] [85.7]

#> # ℹ 1 more variable: .pred_distn_scaled <qtls(7)>The columns marked *_scaled have been rescaled to the

correct units, in this case deaths rather than deaths per

100K people (these remain in .pred).

To look at the prediction intervals:

p %>%

select(geo_value, target_date, .pred_scaled, .pred_distn_scaled) %>%

pivot_quantiles_wider(.pred_distn_scaled)

#> # A tibble: 5 × 10

#> geo_value target_date .pred_scaled `0.05` `0.1` `0.25` `0.5` `0.75` `0.9`

#> <chr> <date> <qtls(7)> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

#> 1 ca 2022-01-07 [71.8] 32.4 52.9 62.0 71.8 71.3 53.7

#> 2 fl 2022-01-07 [76.1] 31.4 50.1 62.7 76.1 69.2 43.2

#> 3 nj 2022-01-07 [57.8] 36.3 45.2 52.6 57.8 55.6 42.6

#> 4 ny 2022-01-07 [137] 85.8 110. 124. 137. 134. 98.5

#> 5 tx 2022-01-07 [85.7] 52.7 64.1 76.5 85.7 96.0 94.7

#> # ℹ 1 more variable: `0.95` <dbl>Last but not least, let’s take a look at the regression fit and check the coefficients:

#> Call:

#> quantreg::rq(formula = ..y ~ ., tau = ~c(0.05, 0.1, 0.25, 0.5,

#> 0.75, 0.9, 0.95), data = data, na.action = stats::na.omit, method = ~"br",

#> model = FALSE)

#>

#> Coefficients:

#> tau= 0.05 tau= 0.10 tau= 0.25 tau= 0.50

#> (Intercept) 0.210972402 0.2359826581 0.2664823658 0.2947231936

#> geo_value_factor_fl 0.029646713 0.0164328932 0.0228839635 0.0477642418

#> geo_value_factor_nj 0.007927635 -0.0004083631 -0.0012956427 -0.0022679253

#> geo_value_factor_ny -0.003055366 -0.0045424488 -0.0154494128 -0.0186682895

#> geo_value_factor_tx 0.020163817 0.0260190881 0.0325313901 0.0380479148

#> lag_0_case_rate -0.001497710 -0.0008892893 -0.0011817541 -0.0011301607

#> lag_7_case_rate 0.004540193 0.0042615475 0.0055779628 0.0058296289

#> lag_14_case_rate 0.001401322 0.0007932742 0.0005183414 0.0001946297

#> lag_0_death_rate 0.500930277 0.4897370924 0.4452127352 0.5322549795

#> lag_7_death_rate 0.023373823 0.0856670896 0.0800142120 0.1192945031

#> lag_14_death_rate -0.114626164 -0.0610698031 0.0154793659 -0.0291354449

#> tau= 0.75 tau= 0.90 tau= 0.95

#> (Intercept) 0.3389449098 0.3797560267 0.415298613

#> geo_value_factor_fl 0.0588500157 0.1331501784 0.168089770

#> geo_value_factor_nj -0.0138013295 -0.0137781000 -0.029492405

#> geo_value_factor_ny -0.0101301003 -0.0195144856 -0.037258463

#> geo_value_factor_tx 0.0489007435 0.0749248485 0.073000742

#> lag_0_case_rate -0.0018750320 -0.0029905834 -0.001683478

#> lag_7_case_rate 0.0067506556 0.0060268400 0.007268965

#> lag_14_case_rate 0.0007346599 0.0052740974 0.002825240

#> lag_0_death_rate 0.5119314030 0.2221271697 0.198207000

#> lag_7_death_rate 0.1447297067 0.2473517717 0.218447512

#> lag_14_death_rate -0.0007025566 -0.0003914277 0.122566902

#>

#> Degrees of freedom: 950 total; 939 residualClassification

Sometimes it is preferable to create a predictive model for surges or

upswings rather than for raw values. In this case, the target is to

predict if the future will have increased case rates (denoted

up), decreased case rates (down), or flat case

rates (flat) relative to the current level. Such models may

be referred to as “hotspot prediction models”. We will follow the

analysis in McDonald, Bien, Green, Hu, et al.

but extend the application to predict three categories instead of

two.

Hotspot prediction uses a categorical outcome variable defined in terms of the relative change of compared to . Where denotes the case rates in location at time . We define the response variables as follows:

where

.

We say location

is a hotspot at time

when

is up, meaning the number of newly reported cases over the

past 7 days has increased by at least 25% compared to the preceding

week. When

is categorized as down, it suggests that there has been at

least a 20% decrease in newly reported cases over the past 7 days (a 20%

decrease is the inverse of a 25% increase). Otherwise, we will consider

the trend to be flat.

The expression of the multinomial regression we will use is as follows:

where is either down, flat, or up

Preprocessing steps are similar to the previous models with an

additional step of categorizing the response variables. Again, we will

use a subset of death rate and case rate data from our built-in dataset

covid_case_death_rates.

jhu <- covid_case_death_rates %>%

dplyr::filter(

time_value >= "2021-06-04",

time_value <= "2021-12-31",

geo_value %in% c("ca", "fl", "tx", "ny", "nj")

) %>%

mutate(geo_value_factor = as.factor(geo_value))

r <- epi_recipe(jhu) %>%

add_role(time_value, new_role = "predictor") %>%

step_dummy(geo_value_factor) %>%

step_growth_rate(case_rate, role = "none", prefix = "gr_") %>%

step_epi_lag(starts_with("gr_"), lag = c(0, 7, 14)) %>%

step_epi_ahead(starts_with("gr_"), ahead = 7, role = "none") %>%

# note recipes::step_cut() has a bug in it, or we could use that here

step_mutate(

response = cut(

ahead_7_gr_7_rel_change_case_rate,

breaks = c(-Inf, -0.2, 0.25, Inf) / 7, # division gives weekly not daily

labels = c("down", "flat", "up")

),

role = "outcome"

) %>%

step_rm(has_role("none"), has_role("raw")) %>%

step_epi_naomit()We will fit the multinomial regression and examine the predictions:

wf <- epi_workflow(r, multinom_reg()) %>%

fit(jhu)

forecast(wf) %>% filter(!is.na(.pred_class))

#> An `epi_df` object, 5 x 3 with metadata:

#> * geo_type = state

#> * time_type = day

#> * as_of = 2023-03-10

#>

#> # A tibble: 5 × 3

#> geo_value time_value .pred_class

#> <chr> <date> <fct>

#> 1 ca 2021-12-31 up

#> 2 fl 2021-12-31 up

#> 3 nj 2021-12-31 up

#> 4 ny 2021-12-31 up

#> 5 tx 2021-12-31 upWe can also look at the estimated coefficients and model summary information:

extract_fit_engine(wf)

#> Call:

#> nnet::multinom(formula = ..y ~ ., data = data, trace = FALSE)

#>

#> Coefficients:

#> (Intercept) time_value geo_value_factor_fl geo_value_factor_nj

#> flat -161.3776 0.008663641 -1.310482 1.145751

#> up -146.6629 0.007796713 -0.565096 1.582188

#> geo_value_factor_ny geo_value_factor_tx lag_0_gr_7_rel_change_case_rate

#> flat 25.93757 -0.2681016 20.06018

#> up 26.04283 -0.2679387 34.18244

#> lag_7_gr_7_rel_change_case_rate lag_14_gr_7_rel_change_case_rate

#> flat 33.91462 7.466482

#> up 57.78538 5.116403

#>

#> Residual Deviance: 1152.434

#> AIC: 1188.434One could also use a formula in epi_recipe() to achieve

the same results as above. However, only one of

add_formula(), add_recipe(), or

workflow_variables() can be specified. For the purpose of

demonstrating add_formula rather than

add_recipe, we will prep and bake

our recipe to return a data.frame that could be used for

model fitting.

b <- bake(prep(r, jhu), jhu)

epi_workflow() %>%

add_formula(

response ~ geo_value + time_value + lag_0_gr_7_rel_change_case_rate +

lag_7_gr_7_rel_change_case_rate + lag_14_gr_7_rel_change_case_rate

) %>%

add_model(parsnip::multinom_reg()) %>%

fit(data = b)

#>

#> ══ Epi Workflow [trained] ═══════════════════════════════════════════════════

#> Preprocessor: Formula

#> Model: multinom_reg()

#> Postprocessor: None

#>

#> ── Preprocessor ─────────────────────────────────────────────────────────────

#>

#> response ~ geo_value + time_value + lag_0_gr_7_rel_change_case_rate +

#> lag_7_gr_7_rel_change_case_rate + lag_14_gr_7_rel_change_case_rate

#>

#> ── Model ────────────────────────────────────────────────────────────────────

#> Call:

#> nnet::multinom(formula = ..y ~ ., data = data, trace = FALSE)

#>

#> Coefficients:

#> (Intercept) geo_valuefl geo_valuenj geo_valueny geo_valuetx time_value

#> flat -161.3782 -1.310438 1.145794 25.93743 -0.2680307 0.008663669

#> up -146.6592 -0.565024 1.582236 26.04272 -0.2678949 0.007796513

#> lag_0_gr_7_rel_change_case_rate lag_7_gr_7_rel_change_case_rate

#> flat 20.05896 33.91374

#> up 34.18073 57.78475

#> lag_14_gr_7_rel_change_case_rate

#> flat 7.466884

#> up 5.116729

#>

#> Residual Deviance: 1152.434

#> AIC: 1188.434

#> Benefits of Lagging and Leading in epipredict

The step_epi_ahead and step_epi_lag

functions in the epipredict package is handy for creating

correct lags and leads for future predictions.

Let’s start with a simple dataset and preprocessing:

ex <- filter(

covid_case_death_rates,

time_value >= "2021-12-01",

time_value <= "2021-12-31",

geo_value == "ca"

)

dim(ex)

#> [1] 31 4We want to predict death rates on 2022-01-07, which is 7 days ahead of the latest available date in our dataset.

We will compare two methods of trying to create lags and leads:

p1 <- epi_recipe(ex) %>%

step_epi_lag(case_rate, lag = c(0, 7, 14)) %>%

step_epi_lag(death_rate, lag = c(0, 7, 14)) %>%

step_epi_ahead(death_rate, ahead = 7, role = "outcome") %>%

step_epi_naomit() %>%

prep(ex)

b1 <- bake(p1, ex)

b1

#> An `epi_df` object, 17 x 11 with metadata:

#> * geo_type = state

#> * time_type = day

#> * as_of = 2023-03-10

#>

#> # A tibble: 17 × 11

#> geo_value time_value case_rate death_rate lag_0_case_rate lag_7_case_rate

#> * <chr> <date> <dbl> <dbl> <dbl> <dbl>

#> 1 ca 2021-12-15 15.8 0.154 15.8 18.0

#> 2 ca 2021-12-16 16.3 0.155 16.3 17.4

#> 3 ca 2021-12-17 16.8 0.158 16.8 17.3

#> 4 ca 2021-12-18 17.6 0.164 17.6 17.2

#> 5 ca 2021-12-19 19.0 0.165 19.0 16.3

#> 6 ca 2021-12-20 20.6 0.163 20.6 16.0

#> # ℹ 11 more rows

#> # ℹ 5 more variables: lag_14_case_rate <dbl>, lag_0_death_rate <dbl>, …

p2 <- epi_recipe(ex) %>%

step_mutate(

lag0case_rate = lag(case_rate, 0),

lag7case_rate = lag(case_rate, 7),

lag14case_rate = lag(case_rate, 14),

lag0death_rate = lag(death_rate, 0),

lag7death_rate = lag(death_rate, 7),

lag14death_rate = lag(death_rate, 14),

ahead7death_rate = lead(death_rate, 7)

) %>%

step_epi_naomit() %>%

prep(ex)

b2 <- bake(p2, ex)

b2

#> An `epi_df` object, 10 x 11 with metadata:

#> * geo_type = state

#> * time_type = day

#> * as_of = 2023-03-10

#>

#> # A tibble: 10 × 11

#> geo_value time_value case_rate death_rate lag0case_rate lag7case_rate

#> * <chr> <date> <dbl> <dbl> <dbl> <dbl>

#> 1 ca 2021-12-15 15.8 0.154 15.8 18.0

#> 2 ca 2021-12-16 16.3 0.155 16.3 17.4

#> 3 ca 2021-12-17 16.8 0.158 16.8 17.3

#> 4 ca 2021-12-18 17.6 0.164 17.6 17.2

#> 5 ca 2021-12-19 19.0 0.165 19.0 16.3

#> 6 ca 2021-12-20 20.6 0.163 20.6 16.0

#> # ℹ 4 more rows

#> # ℹ 5 more variables: lag14case_rate <dbl>, lag0death_rate <dbl>, …Notice the difference in number of rows b1 and

b2 returns. This is because the second version, the one

that doesn’t use step_epi_ahead and

step_epi_lag, has omitted dates compared to the one that

used the epipredict functions.

dates_used_in_training1 <- b1 %>%

select(-ahead_7_death_rate) %>%

na.omit() %>%

pull(time_value)

dates_used_in_training1

#> [1] "2021-12-15" "2021-12-16" "2021-12-17" "2021-12-18" "2021-12-19"

#> [6] "2021-12-20" "2021-12-21" "2021-12-22" "2021-12-23" "2021-12-24"

#> [11] "2021-12-25" "2021-12-26" "2021-12-27" "2021-12-28" "2021-12-29"

#> [16] "2021-12-30" "2021-12-31"

dates_used_in_training2 <- b2 %>%

select(-ahead7death_rate) %>%

na.omit() %>%

pull(time_value)

dates_used_in_training2

#> [1] "2021-12-15" "2021-12-16" "2021-12-17" "2021-12-18" "2021-12-19"

#> [6] "2021-12-20" "2021-12-21" "2021-12-22" "2021-12-23" "2021-12-24"The model that is trained based on the recipes functions will predict 7 days ahead from 2021-12-24 instead of 7 days ahead from 2021-12-31.

References

McDonald, Bien, Green, Hu, et al. “Can auxiliary indicators improve COVID-19 forecasting and hotspot prediction?.” Proceedings of the National Academy of Sciences 118.51 (2021): e2111453118. doi:10.1073/pnas.2111453118

Attribution

This object contains a modified part of the COVID-19 Data Repository by the Center for Systems Science and Engineering (CSSE) at Johns Hopkins University as republished in the COVIDcast Epidata API.

This data set is licensed under the terms of the Creative Commons Attribution 4.0 International license by the Johns Hopkins University on behalf of its Center for Systems Science in Engineering. Copyright Johns Hopkins University 2020.